Fit the Discrete-Time Vector Autoregressive Model By ID (Adaptive Recovery)

Ivan Jacob Agaloos Pesigan

2026-01-19

Source: vignettes/adaptive-recovery-1000.Rmd

Dynamics Description

The Adaptive Recovery process reflects an asymmetric regulatory dynamic between two latent constructs—such as stress and coping—where activation in one system initiates a corrective response in the other. Specifically, stress tends to increase coping responses, while coping reduces subsequent stress, producing a negative feedback loop that promotes stability and recovery.

Individuals differ in the strength and balance of these cross-regulatory influences, leading to variability in how quickly they return to equilibrium after disturbances. The process noise covariance is moderate and negatively correlated, representing compensatory fluctuations where increases in stress are often accompanied by decreases in coping, while measurement error variance is small and symmetric across variables.

This configuration captures a psychologically meaningful stress-response mechanism, characterized by self-correcting dynamics that stabilize the system over time through coordinated but asymmetric influences.

Model

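The measurement model is given by

$$
\mathbf{y}_{i, t} = \boldsymbol{\Lambda} \boldsymbol{\eta}_{i, t} + \boldsymbol{\varepsilon}_{i, t}, \quad \text{with} \quad \boldsymbol{\varepsilon}_{i, t} \sim \mathcal{N} \left( \mathbf{0}, \boldsymbol{\Theta} \right)
$$

where $\mathbf{y}_{i, t}$, $\boldsymbol{\eta}_{i, t}$, and $\boldsymbol{\varepsilon}_{i, t}$ are random variables and $\boldsymbol{\Lambda}$ and $\boldsymbol{\Theta}$ are model parameters. $\mathbf{y}_{i, t}$ represents a vector of observed random variables, $\boldsymbol{\eta}_{i, t}$ a vector of latent random variables, and $\boldsymbol{\varepsilon}_{i, t}$ a vector of random measurement errors, at time $t$ and individual $i$. $\boldsymbol{\Lambda}$ denotes a matrix of factor loadings, and $\boldsymbol{\Theta}$ the covariance matrix of $\boldsymbol{\varepsilon}$ that is invariant across individuals. In this model, $\boldsymbol{\Lambda}$ is an identity matrix and $\boldsymbol{\Theta}$ is a symmetric matrix.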

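The dynamic structure is given by

$$
\boldsymbol{\eta}_{i, t} = \boldsymbol{\beta}_{i} \boldsymbol{\eta}_{i, t - 1} + \boldsymbol{\zeta}_{i, t}, \quad \text{with} \quad \boldsymbol{\zeta}_{i, t} \sim \mathcal{N} \left( \mathbf{0}, \boldsymbol{\Psi} \right)
$$

where $\boldsymbol{\eta}_{i, t}$, $\boldsymbol{\eta}_{i, t - 1}$, and $\boldsymbol{\zeta}_{i, t}$ are random variables, and $\boldsymbol{\beta}_{i}$ and $\boldsymbol{\Psi}$ are model parameters. Here, $\boldsymbol{\eta}_{i, t}$ is a vector of latent variables at time $t$ and individual $i$, $\boldsymbol{\eta}_{i, t - 1}$ represents a vector of latent variables at time $t - 1$ and individual $i$, and $\boldsymbol{\zeta}_{i, t}$ represents a vector of dynamic noise at time $t$ and individual $i$. $\boldsymbol{\beta}_{i}$ is a matrix of autoregression and cross regression coefficients for individual $i$, and $\boldsymbol{\Psi}$ the covariance matrix of $\boldsymbol{\zeta}$ that is invariant across all individuals. In this model, $\boldsymbol{\Psi}$ is a symmetric matrix.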

Data Generation

Notation

Let $t = 1000$ be the number of time points and $n = 1000$ be the number of individuals. We simulate a total of $11000$ time points per individual, discarding the first $10000$ as burn-in. The analysis uses the final $1000$ measurement occasions.

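Let the factor loadings matrix $\boldsymbol{\Lambda}$ be given by

$$
\boldsymbol{\Lambda} = \left( \begin{array}{cc} 1 & 0 \\ 0 & 1 \end{array} \right) .
$$

Let the measurement error covariance matrix $\boldsymbol{\Theta}$ be given by

$$
\boldsymbol{\Theta} = \left( \begin{array}{cc} 0.5 & 0 \\ 0 & 0.5 \end{array} \right) .
$$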

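Let the initial condition $\boldsymbol{\eta}_{i, 0}$ be given by

$$
\boldsymbol{\eta}_{i, 0} \sim \mathcal{N} \left( \boldsymbol{\mu}_{\boldsymbol{\eta} \mid 0}, \boldsymbol{\Sigma}_{\boldsymbol{\eta} \mid 0} \right)
$$

where $\boldsymbol{\mu}_{\boldsymbol{\eta} \mid 0}$ and $\boldsymbol{\Sigma}_{\boldsymbol{\eta} \mid 0}$ are functions of $\boldsymbol{\alpha}_{i}$ and $\boldsymbol{\beta}_{i}$.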
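As a hedged illustration of how an initial covariance matrix can follow from the dynamic parameters, the sketch below assumes that the initial condition is the stationary distribution of the process, so that the covariance solves $\boldsymbol{\Sigma} = \boldsymbol{\beta} \boldsymbol{\Sigma} \boldsymbol{\beta}^{\prime} + \boldsymbol{\Psi}$. The object names (beta_1, psi_1, sigma0_1) are illustrative; the numeric inputs are the values printed for the first individual in the R Function Arguments section.

```r
# Transition matrix and process noise covariance for the first individual
# (copied from the printed beta[[1]] and psi[[1]]; filled column by column).
beta_1 <- matrix(
  data = c(0.5736830, 0.1415191, -0.4704412, 0.6866418),
  nrow = 2
)
psi_1 <- matrix(data = c(0.25, -0.10, -0.10, 0.22), nrow = 2)

# Assumption: the initial covariance is the stationary covariance, i.e.
# vec(Sigma) = (I - beta %x% beta)^{-1} vec(Psi).
vec_sigma <- solve(
  diag(4) - kronecker(beta_1, beta_1),
  as.vector(psi_1)
)
sigma0_1 <- matrix(data = vec_sigma, nrow = 2)
sigma0_1
```

Under this assumption, the result should agree with the printed sigma0[[1]] up to rounding of the inputs.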

Let the intercept vector be normally distributed with the following means and covariance matrix

Let the transition matrix be normally distributed with the following means and covariance matrix

The SimAlphaN and SimBetaN functions from the simStateSpace package generate random intercept vectors and transition matrices from the multivariate normal distribution. Note that the SimBetaN function generates transition matrices that are weakly stationary with an option to set lower and upper bounds.
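A common criterion for weak stationarity of a first-order vector autoregressive process is that all eigenvalues of the transition matrix lie strictly inside the unit circle. The base R sketch below applies that criterion to the transition matrix printed for the first individual. It is only an illustration of the criterion, not a description of how SimBetaN is implemented, and the object names are illustrative.

```r
# Transition matrix for the first individual (printed beta[[1]]).
beta_1 <- matrix(
  data = c(0.5736830, 0.1415191, -0.4704412, 0.6866418),
  nrow = 2
)

# Moduli of the (possibly complex) eigenvalues of the transition matrix.
moduli <- Mod(eigen(beta_1, only.values = TRUE)$values)
moduli

# TRUE when every eigenvalue lies strictly inside the unit circle.
is_stationary <- all(moduli < 1)
is_stationary
```

For this matrix the eigenvalues are a complex-conjugate pair, which corresponds to damped oscillation around the set-point.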

The person-specific set-point vector $\boldsymbol{\mu}_{\boldsymbol{\eta}, i}$ was derived from the generated $\boldsymbol{\alpha}_{i}$ and $\boldsymbol{\beta}_{i}$.
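For a stationary first-order process, the long-run mean implied by an intercept vector and a transition matrix is $\left( \mathbf{I} - \boldsymbol{\beta} \right)^{-1} \boldsymbol{\alpha}$. The sketch below assumes this is the relation used for the set-point and evaluates it with the values printed for the first individual; alpha_1, beta_1, and mu_eta_1 are illustrative names.

```r
# Intercept vector and transition matrix for the first individual
# (printed alpha[[1]] and beta[[1]]).
alpha_1 <- c(0.5941503, 0.6684587)
beta_1 <- matrix(
  data = c(0.5736830, 0.1415191, -0.4704412, 0.6866418),
  nrow = 2
)

# Set-point as the solution of (I - beta) mu = alpha.
mu_eta_1 <- solve(diag(2) - beta_1, alpha_1)
mu_eta_1
```

The result should match the printed mu_eta[[1]] (and mu0[[1]]) up to rounding.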

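Let the dynamic process noise covariance matrix $\boldsymbol{\Psi}$ be given by

$$
\boldsymbol{\Psi} = \left( \begin{array}{cc} 0.25 & -0.10 \\ -0.10 & 0.22 \end{array} \right) .
$$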

R Function Arguments

n

#> [1] 1000

time

#> [1] 11000

burnin

#> [1] 10000

# first mu0 in the list of length n

mu0[[1]]

#> [1] -0.6409103 1.8437611

# first sigma0 in the list of length n

sigma0[[1]]

#> [,1] [,2]

#> [1,] 0.6803744 -0.2369735

#> [2,] -0.2369735 0.3548975

# first sigma0_l in the list of length n

sigma0_l[[1]] # sigma0_l <- t(chol(sigma0))

#> [,1] [,2]

#> [1,] 0.8248481 0.0000000

#> [2,] -0.2872935 0.5218811

# first alpha in the list of length n

alpha[[1]]

#> [1] 0.5941503 0.6684587

# first beta in the list of length n

beta[[1]]

#> [,1] [,2]

#> [1,] 0.5736830 -0.4704412

#> [2,] 0.1415191 0.6866418

# first psi in the list of length n

psi[[1]]

#> [,1] [,2]

#> [1,] 0.25 -0.10

#> [2,] -0.10 0.22

psi_l[[1]] # psi_l <- t(chol(psi))

#> [,1] [,2]

#> [1,] 0.5 0.0000000

#> [2,] -0.2 0.4242641

nu

#> [[1]]

#> [1] 0 0

lambda

#> [[1]]

#> [,1] [,2]

#> [1,] 1 0

#> [2,] 0 1

theta

#> [[1]]

#> [,1] [,2]

#> [1,] 0.5 0.0

#> [2,] 0.0 0.5

theta_l # theta_l <- t(chol(theta))

#> [[1]]

#> [,1] [,2]

#> [1,] 0.7071068 0.0000000

#> [2,] 0.0000000 0.7071068

# first mu_eta (set-point) in the list of length n

mu_eta[[1]]

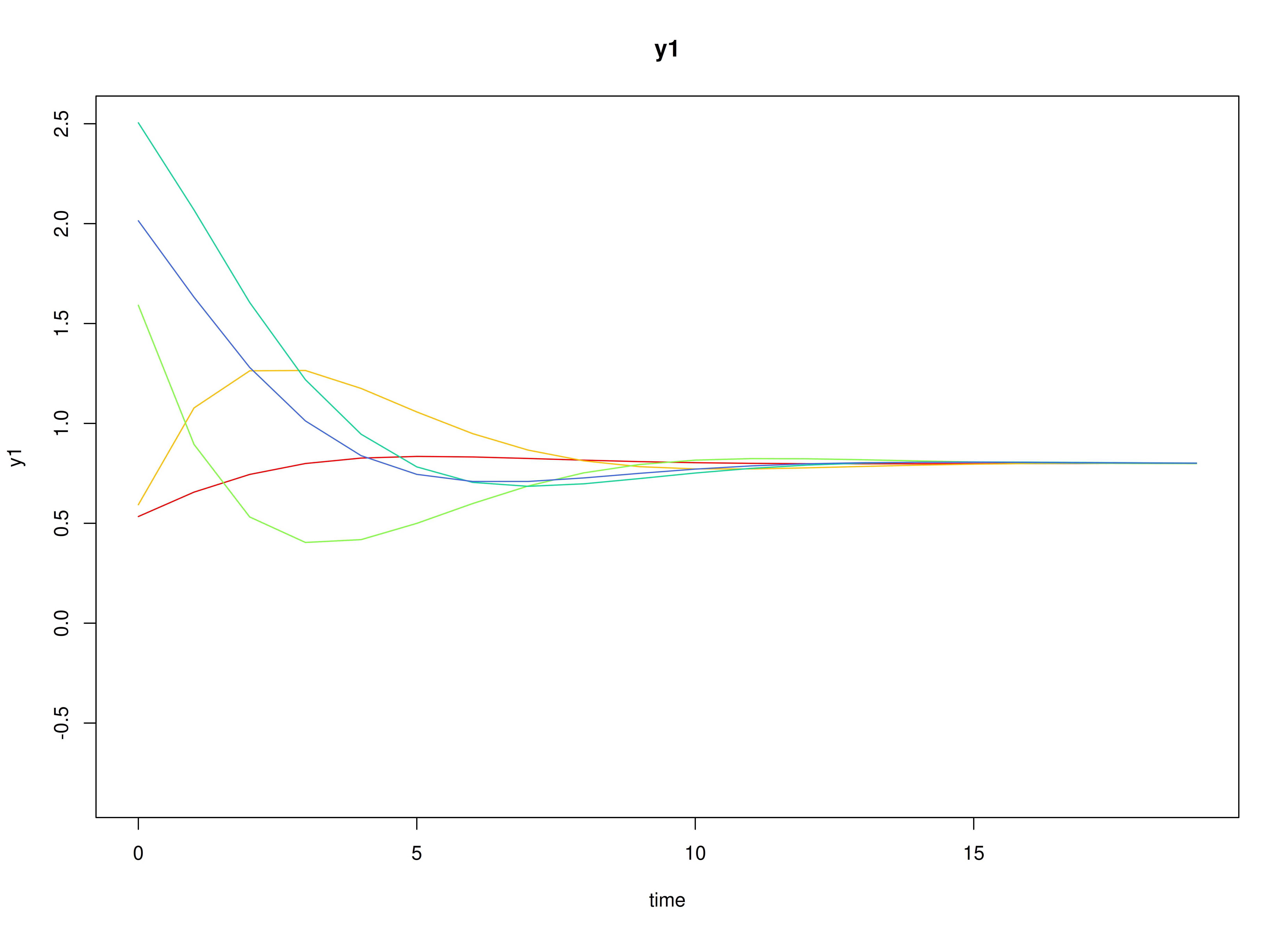

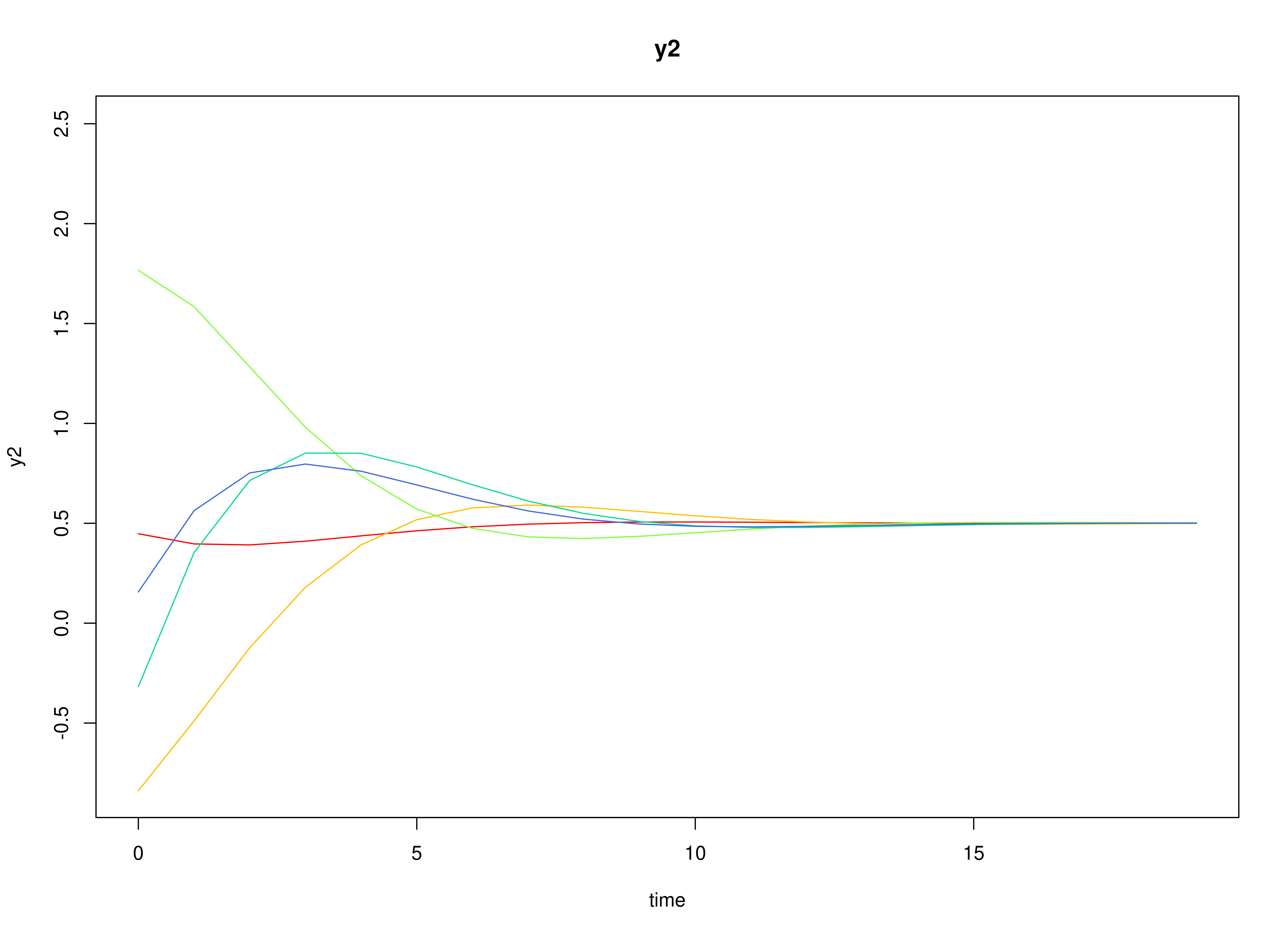

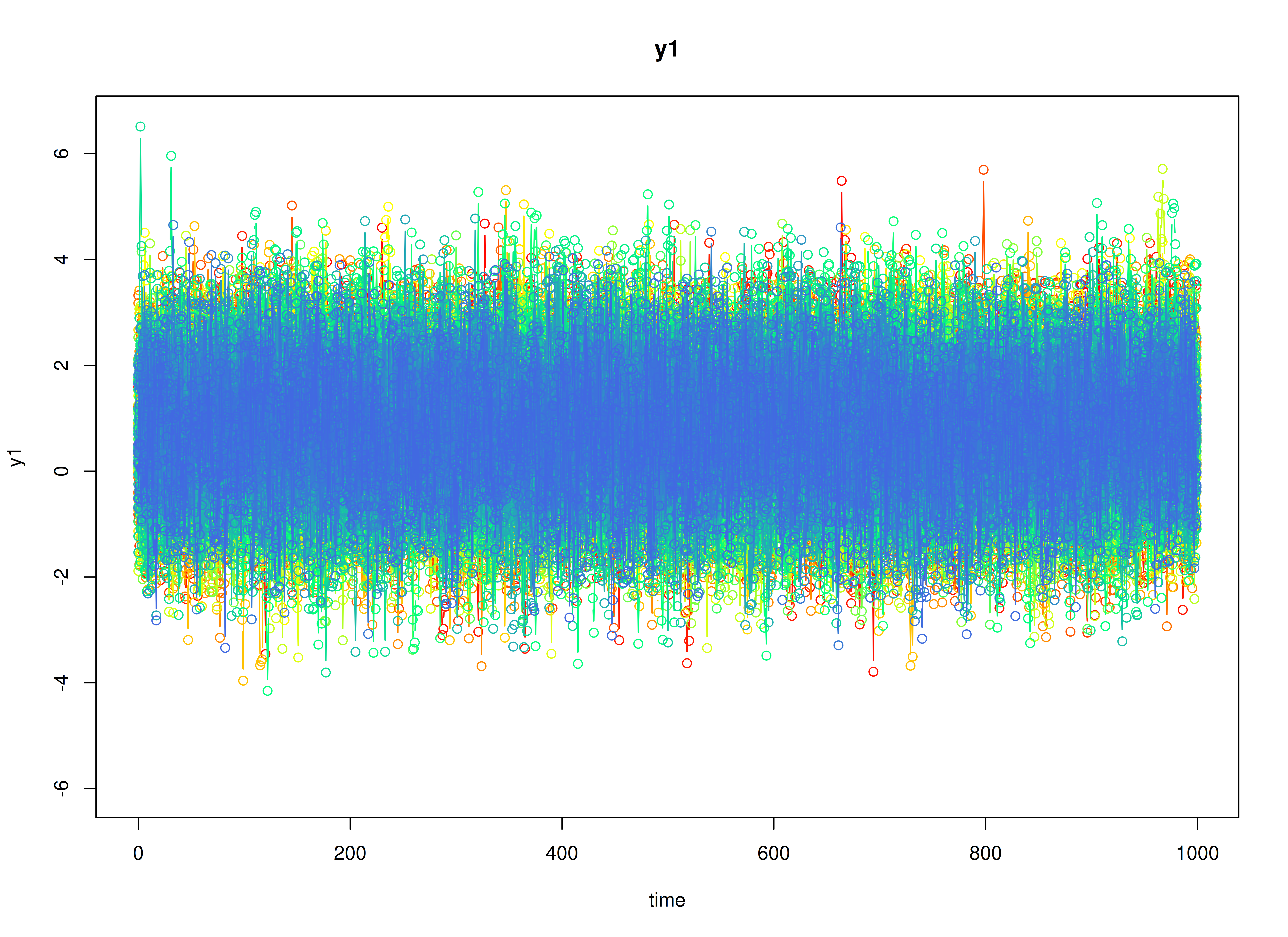

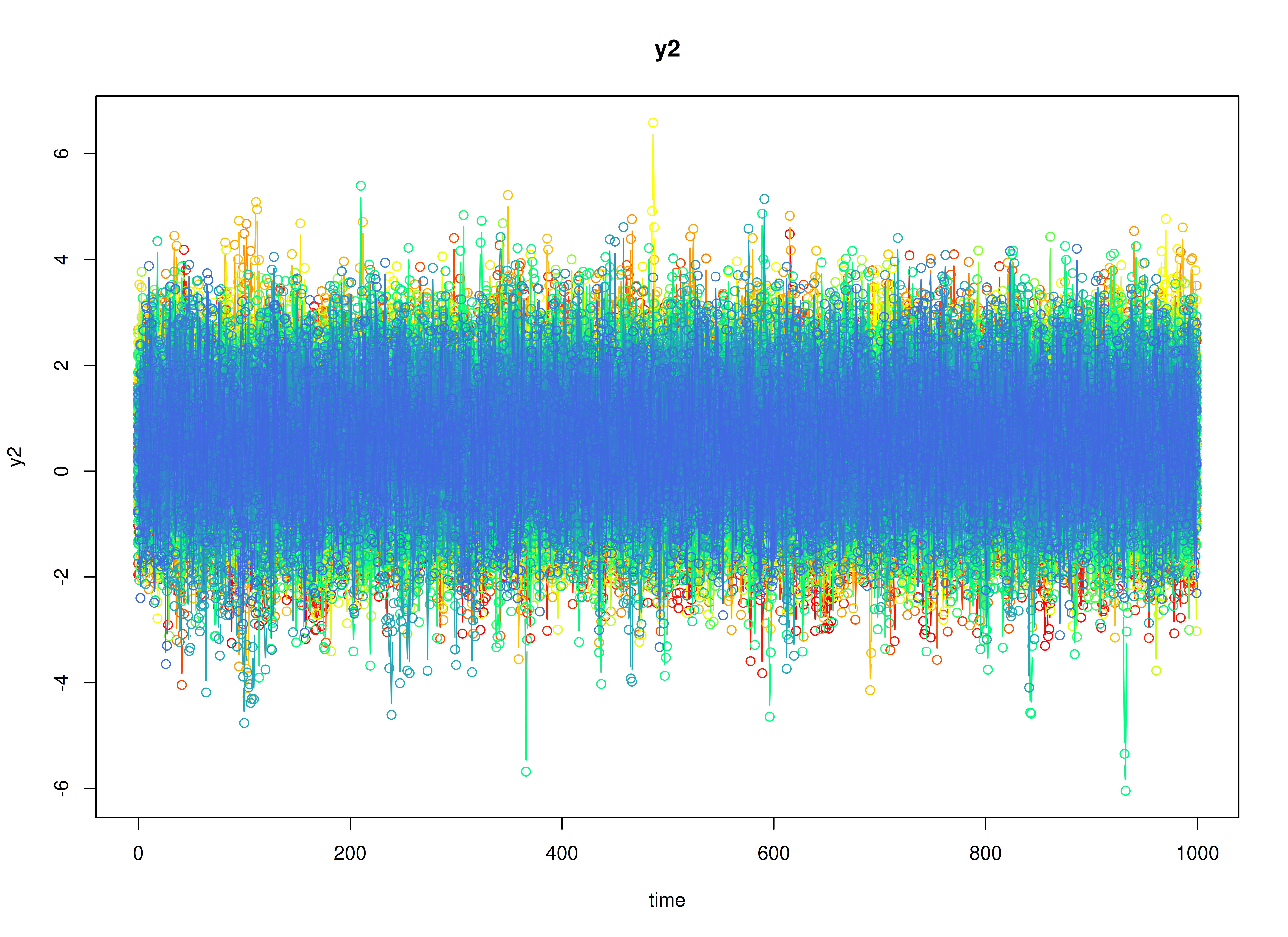

#> [1] -0.6409103 1.8437611Visualizing the Dynamics Without Process Noise and Measurement Error (n = 5 with Different Initial Condition)
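The noise-free dynamics can be sketched in base R by iterating the deterministic recursion in centered form, $\boldsymbol{\eta}_{t} = \boldsymbol{\mu}_{\boldsymbol{\eta}} + \boldsymbol{\beta} \left( \boldsymbol{\eta}_{t - 1} - \boldsymbol{\mu}_{\boldsymbol{\eta}} \right)$. This is a minimal illustration for the first individual only. The starting displacement and the number of steps are hypothetical choices, not the settings used to produce the figures.

```r
# Set-point and transition matrix for the first individual
# (printed mu_eta[[1]] and beta[[1]]).
mu_eta_1 <- c(-0.6409103, 1.8437611)
beta_1 <- matrix(
  data = c(0.5736830, 0.1415191, -0.4704412, 0.6866418),
  nrow = 2
)

steps <- 50 # hypothetical number of time points
eta <- matrix(NA_real_, nrow = steps + 1, ncol = 2)
eta[1, ] <- mu_eta_1 + c(2, -2) # hypothetical initial displacement

# Deterministic recursion: no process noise, no measurement error.
for (t in seq_len(steps)) {
  eta[t + 1, ] <- mu_eta_1 + as.vector(beta_1 %*% (eta[t, ] - mu_eta_1))
}

head(eta)
eta[steps + 1, ] # close to the set-point after the transient dies out
```

Plotting the two columns of eta against time (for example with matplot) shows the damped return to the set-point.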

Using the SimSSMIVary Function from the simStateSpace Package to Simulate Data

library(simStateSpace)

sim <- SimSSMIVary(

n = n,

time = time,

mu0 = mu0,

sigma0_l = sigma0_l,

alpha = alpha,

beta = beta,

psi_l = psi_l,

nu = nu,

lambda = lambda,

theta_l = theta_l

)

data <- as.data.frame(sim, burnin = burnin)

head(data)

#> id time y1 y2

#> 1 1 0 -1.3784256 3.4783987

#> 2 1 1 -1.7787675 2.2002487

#> 3 1 2 0.1032886 1.2379348

#> 4 1 3 -0.2237299 0.8511623

#> 5 1 4 -1.1157617 2.0194513

#> 6 1 5 -0.1886396 1.5396721

plot(sim, burnin = burnin)

Model Fitting

The FitDTVARMxID function fits a DT-VAR model on each individual $i$.

To set up the estimation, we first provide starting values for each parameter matrix.

Set-Point (mu_eta)

The set-point vector is initialized with the person-specific set-points derived in the simulation.

mu_eta_values <- mu_eta

Autoregressive Parameters (beta)

We initialize the autoregressive coefficient matrix with the true values used in simulation.

beta_values <- beta

LDL’-parameterized covariance matrices

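Covariances such as psi and theta are estimated using the LDL’ decomposition of a positive definite covariance matrix. The decomposition expresses a covariance matrix $\boldsymbol{\Sigma}$ as

$$
\boldsymbol{\Sigma} = \left( \mathbf{L} + \mathbf{I} \right) \mathrm{diag} \left( \mathrm{Softplus} \left( \mathbf{d}_{uc} \right) \right) \left( \mathbf{L} + \mathbf{I} \right)^{\prime},
$$

where:

- $\mathbf{L}$ is a strictly lower-triangular matrix of free parameters (l_mat_strict),
- $\mathbf{I}$ is the identity matrix,
- $\mathbf{d}_{uc}$ is an unconstrained vector,
- $\mathrm{Softplus} \left( \mathbf{d}_{uc} \right) = \log \left( 1 + \exp \left( \mathbf{d}_{uc} \right) \right)$ ensures strictly positive diagonal entries.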

The LDL() function extracts this decomposition from a positive definite covariance matrix. It returns:

- d_uc: unconstrained diagonal parameters, equal to InvSoftplus(d_vec),
- d_vec: diagonal entries, equal to Softplus(d_uc),
- l_mat_strict: the strictly lower-triangular factor.
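To make the parameterization concrete, the following base R sketch computes the same three quantities from a Cholesky factor. The helper names softplus, inv_softplus, and ldl_sketch are hypothetical and written only for illustration; they are not the package's LDL(), Softplus(), or InvSoftplus(), whose internals may differ.

```r
# Hypothetical helpers (not the package functions).
softplus <- function(x) log1p(exp(x))
inv_softplus <- function(x) log(expm1(x))

ldl_sketch <- function(sigma) {
  p <- nrow(sigma)
  l_chol <- t(chol(sigma)) # lower-triangular Cholesky factor
  d_vec <- diag(l_chol)^2 # diagonal entries of D
  # Rescale columns so the lower-triangular factor has a unit diagonal.
  l_unit <- l_chol %*% diag(1 / diag(l_chol), nrow = p)
  list(
    d_uc = inv_softplus(d_vec),
    d_vec = d_vec,
    l_mat_strict = l_unit - diag(p)
  )
}

# Process noise covariance matrix used in this vignette.
psi_1 <- matrix(data = c(0.25, -0.10, -0.10, 0.22), nrow = 2)
out <- ldl_sketch(psi_1)
out

# Reconstruct the covariance matrix from the LDL' pieces.
l_full <- out$l_mat_strict + diag(2)
psi_back <- l_full %*% diag(softplus(out$d_uc), nrow = 2) %*% t(l_full)
psi_back
```

The softplus transform keeps the diagonal of D positive for any real-valued d_uc, which is why the optimizer can treat d_uc as unconstrained.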

sigma <- matrix(

data = c(1.0, 0.5, 0.5, 1.0),

nrow = 2,

ncol = 2

)

ldl_sigma <- LDL(sigma)

d_uc <- ldl_sigma$d_uc

l_mat_strict <- ldl_sigma$l_mat_strict

I <- diag(2)

sigma_reconstructed <- (l_mat_strict + I) %*% diag(log1p(exp(d_uc)), 2) %*% t(l_mat_strict + I)

sigma_reconstructed

#> [,1] [,2]

#> [1,] 1.0 0.5

#> [2,] 0.5 1.0

Process Noise Covariance Matrix (psi)

Starting values for the process noise covariance matrix are given below, with corresponding LDL’ parameters.

psi_values <- psi[[1]]

ldl_psi_values <- LDL(psi_values)

psi_d_values <- ldl_psi_values$d_uc

psi_l_values <- ldl_psi_values$l_mat_strict

psi_d_values

#> [1] -1.258692 -1.623449

psi_l_values

#> [,1] [,2]

#> [1,] 0.0 0

#> [2,] -0.4 0

Measurement Error Covariance Matrix (theta)

Starting values for the measurement error covariance matrix are given below, with corresponding LDL’ parameters.

theta_values <- theta[[1]]

ldl_theta_values <- LDL(theta_values)

theta_d_values <- ldl_theta_values$d_uc

theta_l_values <- ldl_theta_values$l_mat_strict

theta_d_values

#> [1] -0.4327521 -0.4327521

theta_l_values

#> [,1] [,2]

#> [1,] 0 0

#> [2,] 0 0

Initial mean vector (mu_0) and covariance matrix (sigma_0)

The initial mean vector $\boldsymbol{\mu}_{\boldsymbol{\eta} \mid 0}$ and covariance matrix $\boldsymbol{\Sigma}_{\boldsymbol{\eta} \mid 0}$ are fixed using mu0 and sigma0.

mu0_values <- mu0

FitDTVARMxID

fit <- FitDTVARMxID(

data = data,

observed = c("y1", "y2"),

id = "id",

center = TRUE,

mu_eta_values = mu_eta_values,

beta_values = beta_values,

psi_d_values = psi_d_values,

psi_l_values = psi_l_values,

theta_d_values = theta_d_values,

mu0_values = mu0_values,

sigma0_d_values = sigma0_d_values,

sigma0_l_values = sigma0_l_values,

ncores = parallel::detectCores()

)

Parameter estimates

head(summary(fit))

#> beta_1_1 beta_2_1 beta_1_2 beta_2_2 mu_eta_1_1

#> FitDTVARMxID_DTVAR_ID1.Rds 0.4354550 0.17318034 -0.8617860 0.7654212 -0.5891073

#> FitDTVARMxID_DTVAR_ID2.Rds 0.3723701 0.60873921 -0.3355289 0.9928778 0.1821793

#> FitDTVARMxID_DTVAR_ID3.Rds 0.5090962 0.43699533 -0.1897852 0.8883645 0.3937449

#> FitDTVARMxID_DTVAR_ID4.Rds 0.6211980 0.17807858 -0.1433649 0.5820667 1.0160762

#> FitDTVARMxID_DTVAR_ID5.Rds 0.6419239 0.22464598 -0.2416318 0.7745309 -0.8752583

#> FitDTVARMxID_DTVAR_ID6.Rds 0.4912871 0.02894239 -0.2063216 0.6044975 0.9047161

#> mu_eta_2_1 psi_l_2_1 psi_d_1_1 psi_d_2_1

#> FitDTVARMxID_DTVAR_ID1.Rds 1.785867 -0.3603330 -1.838030 -1.802444

#> FitDTVARMxID_DTVAR_ID2.Rds 6.061074 -0.4465356 -1.254230 -2.289462

#> FitDTVARMxID_DTVAR_ID3.Rds 4.190544 -0.3889435 -1.583936 -1.441315

#> FitDTVARMxID_DTVAR_ID4.Rds 4.097153 -0.6286424 -1.208268 -1.613658

#> FitDTVARMxID_DTVAR_ID5.Rds 3.992908 -0.4504113 -1.163153 -1.758080

#> FitDTVARMxID_DTVAR_ID6.Rds 2.626440 -0.1484303 -1.025647 -1.244879

#> theta_d_1_1 theta_d_2_1

#> FitDTVARMxID_DTVAR_ID1.Rds -0.4881215 -0.2447964

#> FitDTVARMxID_DTVAR_ID2.Rds -0.3589143 -0.2971942

#> FitDTVARMxID_DTVAR_ID3.Rds -0.3636831 -0.5559566

#> FitDTVARMxID_DTVAR_ID4.Rds -0.2978613 -0.4055079

#> FitDTVARMxID_DTVAR_ID5.Rds -0.4315600 -0.5602322

#> FitDTVARMxID_DTVAR_ID6.Rds -0.7717579 -0.4389711

Proportion of converged cases

converged(

fit,

theta_tol = 0.01,

prop = TRUE

)

#> [1] 0.975

Fixed-Effect Meta-Analysis of Measurement Error

When fitting DT-VAR models per person, separating process noise ($\boldsymbol{\Psi}$) from measurement error ($\boldsymbol{\Theta}$) can be unstable for some individuals. To stabilize inference, we first pool the person-level $\boldsymbol{\Theta}$ estimates from only the converged fits using a fixed-effect meta-analysis. This yields a high-precision estimate of the common measurement-error covariance that we will then hold fixed in a second pass of model fitting.

What the code does:

- Selects individuals that converged and whose $\boldsymbol{\Theta}$ diagonals exceed a small threshold (theta_tol), filtering out near-zero or ill-conditioned solutions.
- Extracts each person’s LDL’ diagonal parameters for $\boldsymbol{\Theta}$ and their sampling covariance matrices.
- Computes the inverse-variance-weighted pooled estimate (fixed effect), returning it on the same LDL’ parameterization used by FitDTVARMxID().
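The pooling step in the last bullet can be sketched in base R as a multivariate inverse-variance-weighted average. This is a generic textbook formula, not the internals of MetaVARMx. The person-level estimates below are the six theta_d rows printed by head(summary(fit)); the sampling covariance matrices are hypothetical placeholders, because the source does not print them.

```r
# Person-level LDL' diagonal estimates for theta
# (first six rows of the printed summary(fit) output).
theta_d <- rbind(
  c(-0.4881215, -0.2447964),
  c(-0.3589143, -0.2971942),
  c(-0.3636831, -0.5559566),
  c(-0.2978613, -0.4055079),
  c(-0.4315600, -0.5602322),
  c(-0.7717579, -0.4389711)
)

# HYPOTHETICAL sampling covariance matrices (not from the source):
# the same diagonal matrix for every person.
v <- replicate(nrow(theta_d), diag(0.05, 2), simplify = FALSE)

# Inverse-variance weights and the pooled fixed-effect estimate:
# pooled = (sum_i V_i^{-1})^{-1} sum_i V_i^{-1} theta_i
w <- lapply(v, solve)
w_sum <- Reduce(`+`, w)
w_theta <- Reduce(
  `+`,
  lapply(seq_along(w), function(i) w[[i]] %*% theta_d[i, ])
)
pooled <- as.vector(solve(w_sum, w_theta))
pooled_cov <- solve(w_sum) # sampling covariance of the pooled estimate
pooled
```

With identical sampling covariances, as assumed here, the pooled estimate reduces to the simple average of the person-level estimates; with person-specific covariances, more precisely estimated individuals receive more weight.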

library(metaVAR)

fixed_theta <- MetaVARMx(

fit,

random = FALSE, # TRUE by default

effects = FALSE, # TRUE by default

cov_meas = TRUE, # FALSE by default

theta_tol = 0.01,

ncores = parallel::detectCores()

)

You can read summary(fixed_theta) as providing the pooled (fixed) measurement-error scale that is common across persons. If individual instruments truly share the same reliability structure, fixing $\boldsymbol{\Theta}$ to this pooled value improves stability and often reduces bias in the dynamic parameters.

Note: Fixed-effect pooling assumes a common $\boldsymbol{\Theta}$ across individuals.

coef(fixed_theta)

#> alpha_1_1 alpha_2_1

#> -0.3932456 -0.4085644

summary(fixed_theta)

#> [1] 0

#> Call:

#> MetaVARMx(object = fit, random = FALSE, effects = FALSE, cov_meas = TRUE,

#> theta_tol = 0.01, ncores = parallel::detectCores())

#>

#> CI type = "normal"

#> est se z p 2.5% 97.5%

#> alpha[1,1] -0.3932 0.0041 -94.8567 0 -0.4014 -0.3851

#> alpha[2,1] -0.4086 0.0041 -98.5010 0 -0.4167 -0.4004
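Because the pooled coefficients are reported on the unconstrained LDL’ scale, it can help to map them back to variances. The sketch below assumes a diagonal $\boldsymbol{\Theta}$ (only diagonal parameters are estimated for theta in this vignette), so that the variances are simply the softplus of the pooled values. The object names are illustrative.

```r
# Pooled unconstrained diagonal parameters (printed coef(fixed_theta)).
theta_d_pooled <- c(-0.3932456, -0.4085644)

# Softplus back-transform to the variance scale,
# assuming theta is diagonal.
theta_diag <- log1p(exp(theta_d_pooled))
theta_diag
```

Both values come out close to the generating measurement error variance of 0.5.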

theta_d_values <- coef(fixed_theta)

Refit the model with fixed measurement error covariance matrix

We refit the individual models using the pooled $\boldsymbol{\Theta}$ as a fixed measurement-error covariance matrix.

fit <- FitDTVARMxID(

data = data,

observed = c("y1", "y2"),

id = "id",

center = TRUE,

mu_eta_values = mu_eta_values,

beta_values = beta_values,

psi_d_values = psi_d_values,

psi_l_values = psi_l_values,

theta_fixed = TRUE,

theta_d_values = theta_d_values,

mu0_values = mu0_values,

sigma0_d_values = sigma0_d_values,

sigma0_l_values = sigma0_l_values,

ncores = parallel::detectCores()

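)

With $\boldsymbol{\Theta}$ fixed, the re-estimation focuses on the dynamic structure ($\boldsymbol{\mu}_{\boldsymbol{\eta}, i}$, $\boldsymbol{\beta}_{i}$, $\boldsymbol{\Psi}$). In practice, this often increases the proportion of converged fits and yields more stable cross-lag estimates.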

Proportion of converged cases

converged(

fit,

prop = TRUE

)

#> [1] 1

Random-Effects Meta-Analysis of Person-Specific Dynamics and Means

Having stabilized $\boldsymbol{\Theta}$, we synthesize the person-specific estimates to recover population-level effects and their between-person variability. We use a random-effects model so the pooled mean reflects both within-person estimation uncertainty and between-person heterogeneity.

random <- MetaVARMx(

fit,

effects = TRUE,

set_point = TRUE,

robust_v = FALSE,

robust = TRUE,

ncores = parallel::detectCores()

)

summary(random)

#> [1] 0

#> Call:

#> MetaVARMx(object = fit, effects = TRUE, set_point = TRUE, robust_v = FALSE,

#> robust = TRUE, ncores = parallel::detectCores())

#>

#> CI type = "normal"

#> est se z p 2.5% 97.5%

#> alpha[1,1] 0.4551 0.0369 12.3290 0.0000 0.3827 0.5274

#> alpha[2,1] 2.5637 0.0768 33.4014 0.0000 2.4133 2.7142

#> alpha[3,1] 0.6104 0.0048 126.7204 0.0000 0.6010 0.6198

#> alpha[4,1] 0.2560 0.0045 56.9874 0.0000 0.2472 0.2648

#> alpha[5,1] -0.2933 0.0047 -62.7708 0.0000 -0.3024 -0.2841

#> alpha[6,1] 0.7055 0.0047 150.5207 0.0000 0.6964 0.7147

#> tau_sqr[1,1] 1.3597 0.0611 22.2544 0.0000 1.2400 1.4795

#> tau_sqr[2,1] -0.5171 0.0913 -5.6638 0.0000 -0.6960 -0.3382

#> tau_sqr[3,1] 0.0232 0.0056 4.1445 0.0000 0.0122 0.0341

#> tau_sqr[4,1] 0.0005 0.0052 0.1026 0.9183 -0.0096 0.0106

#> tau_sqr[5,1] 0.0367 0.0054 6.7934 0.0000 0.0261 0.0473

#> tau_sqr[6,1] -0.0574 0.0056 -10.2249 0.0000 -0.0684 -0.0464

#> tau_sqr[2,2] 5.8883 0.2643 22.2771 0.0000 5.3702 6.4063

#> tau_sqr[3,2] 0.0193 0.0116 1.6656 0.0958 -0.0034 0.0421

#> tau_sqr[4,2] 0.0323 0.0109 2.9698 0.0030 0.0110 0.0536

#> tau_sqr[5,2] 0.1253 0.0114 10.9545 0.0000 0.1029 0.1477

#> tau_sqr[6,2] 0.1689 0.0121 13.9952 0.0000 0.1452 0.1925

#> tau_sqr[3,3] 0.0203 0.0010 19.4258 0.0000 0.0182 0.0223

#> tau_sqr[4,3] 0.0070 0.0007 9.7506 0.0000 0.0056 0.0084

#> tau_sqr[5,3] -0.0012 0.0007 -1.6789 0.0932 -0.0025 0.0002

#> tau_sqr[6,3] -0.0012 0.0007 -1.7696 0.0768 -0.0026 0.0001

#> tau_sqr[4,4] 0.0176 0.0009 19.4539 0.0000 0.0158 0.0193

#> tau_sqr[5,4] -0.0012 0.0006 -1.8426 0.0654 -0.0025 0.0001

#> tau_sqr[6,4] -0.0016 0.0006 -2.4664 0.0136 -0.0029 -0.0003

#> tau_sqr[5,5] 0.0179 0.0009 18.9322 0.0000 0.0160 0.0197

#> tau_sqr[6,5] 0.0075 0.0007 10.5852 0.0000 0.0061 0.0089

#> tau_sqr[6,6] 0.0184 0.0010 19.1763 0.0000 0.0165 0.0203

#> i_sqr[1,1] 0.9987 0.0001 17355.3529 0.0000 0.9986 0.9988

#> i_sqr[2,1] 0.9998 0.0000 101397.0457 0.0000 0.9998 0.9998

#> i_sqr[3,1] 0.9463 0.0026 361.5838 0.0000 0.9411 0.9514

#> i_sqr[4,1] 0.9553 0.0022 443.4095 0.0000 0.9510 0.9595

#> i_sqr[5,1] 0.9486 0.0024 389.0382 0.0000 0.9439 0.9534

#> i_sqr[6,1] 0.9650 0.0017 582.2637 0.0000 0.9617 0.9682

Normal Theory Confidence Intervals

confint(random, level = 0.95, lb = FALSE)

#> 2.5 % 97.5 %

#> alpha[1,1] 0.382739785 5.274317e-01

#> alpha[2,1] 2.413288001 2.714162e+00

#> alpha[3,1] 0.600964249 6.198463e-01

#> alpha[4,1] 0.247216344 2.648270e-01

#> alpha[5,1] -0.302427411 -2.841132e-01

#> alpha[6,1] 0.696359343 7.147335e-01

#> tau_sqr[1,1] 1.239993658 1.479502e+00

#> tau_sqr[2,1] -0.696024774 -3.381504e-01

#> tau_sqr[3,1] 0.012203814 3.410266e-02

#> tau_sqr[4,1] -0.009583580 1.064267e-02

#> tau_sqr[5,1] 0.026120352 4.730393e-02

#> tau_sqr[6,1] -0.068402668 -4.639715e-02

#> tau_sqr[2,2] 5.370212295 6.406328e+00

#> tau_sqr[3,2] -0.003419562 4.211302e-02

#> tau_sqr[4,2] 0.010979585 5.360109e-02

#> tau_sqr[5,2] 0.102885064 1.477234e-01

#> tau_sqr[6,2] 0.145239954 1.925453e-01

#> tau_sqr[3,3] 0.018225741 2.231622e-02

#> tau_sqr[4,3] 0.005588043 8.399720e-03

#> tau_sqr[5,3] -0.002530219 1.954092e-04

#> tau_sqr[6,3] -0.002600107 1.327059e-04

#> tau_sqr[4,4] 0.015782911 1.931945e-02

#> tau_sqr[5,4] -0.002468608 7.620572e-05

#> tau_sqr[6,4] -0.002876701 -3.291126e-04

#> tau_sqr[5,5] 0.016036043 1.973975e-02

#> tau_sqr[6,5] 0.006117691 8.898010e-03

#> tau_sqr[6,6] 0.016527841 2.029101e-02

#> i_sqr[1,1] 0.998604912 9.988305e-01

#> i_sqr[2,1] 0.999760965 9.997996e-01

#> i_sqr[3,1] 0.941141587 9.514001e-01

#> i_sqr[4,1] 0.951039346 9.594843e-01

#> i_sqr[5,1] 0.943869824 9.534284e-01

#> i_sqr[6,1] 0.961739516 9.682360e-01
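A normal-theory interval is the estimate plus or minus a standard normal quantile times the standard error. As a quick check, the sketch below rebuilds the 95% interval for alpha[1,1] from the rounded est and se columns of summary(random); the object names are illustrative.

```r
# Rounded estimate and standard error for alpha[1,1]
# (from the printed summary(random) table).
est <- 0.4551
se <- 0.0369

level <- 0.95
z <- qnorm(1 - (1 - level) / 2) # two-sided critical value

ci <- est + c(-1, 1) * z * se
ci
```

The limits agree with the confint() output to about three decimal places; the remaining difference comes from using rounded inputs.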

confint(random, level = 0.99, lb = FALSE)

#> 0.5 % 99.5 %

#> alpha[1,1] 0.360007044 5.501644e-01

#> alpha[2,1] 2.366017199 2.761433e+00

#> alpha[3,1] 0.597997660 6.228129e-01

#> alpha[4,1] 0.244449508 2.675938e-01

#> alpha[5,1] -0.305304783 -2.812358e-01

#> alpha[6,1] 0.693472553 7.176203e-01

#> tau_sqr[1,1] 1.202364105 1.517132e+00

#> tau_sqr[2,1] -0.752250918 -2.819242e-01

#> tau_sqr[3,1] 0.008763255 3.754322e-02

#> tau_sqr[4,1] -0.012761354 1.382044e-02

#> tau_sqr[5,1] 0.022792171 5.063211e-02

#> tau_sqr[6,1] -0.071859985 -4.293984e-02

#> tau_sqr[2,2] 5.207426779 6.569113e+00

#> tau_sqr[3,2] -0.010573249 4.926670e-02

#> tau_sqr[4,2] 0.004283261 6.029741e-02

#> tau_sqr[5,2] 0.095840455 1.547680e-01

#> tau_sqr[6,2] 0.137807742 1.999775e-01

#> tau_sqr[3,3] 0.017583081 2.295888e-02

#> tau_sqr[4,3] 0.005146296 8.841467e-03

#> tau_sqr[5,3] -0.002958446 6.236364e-04

#> tau_sqr[6,3] -0.003029463 5.620619e-04

#> tau_sqr[4,4] 0.015227280 1.987508e-02

#> tau_sqr[5,4] -0.002868427 4.760249e-04

#> tau_sqr[6,4] -0.003276956 7.114253e-05

#> tau_sqr[5,5] 0.015454149 2.032164e-02

#> tau_sqr[6,5] 0.005680871 9.334829e-03

#> tau_sqr[6,6] 0.015936605 2.088224e-02

#> i_sqr[1,1] 0.998569472 9.988659e-01

#> i_sqr[2,1] 0.999754892 9.998057e-01

#> i_sqr[3,1] 0.939529857 9.530118e-01

#> i_sqr[4,1] 0.949712553 9.608111e-01

#> i_sqr[5,1] 0.942368069 9.549301e-01

#> i_sqr[6,1] 0.960718840 9.692567e-01

Robust Confidence Intervals

confint(random, level = 0.95, lb = FALSE, robust = TRUE)

#> 2.5 % 97.5 %

#> alpha[1,1] 0.382569928 5.276015e-01

#> alpha[2,1] 2.412705804 2.714745e+00

#> alpha[3,1] 0.600976455 6.198341e-01

#> alpha[4,1] 0.247172965 2.648704e-01

#> alpha[5,1] -0.302728782 -2.838118e-01

#> alpha[6,1] 0.696076904 7.150159e-01

#> tau_sqr[1,1] 1.043429463 1.676067e+00

#> tau_sqr[2,1] -1.004187692 -2.998745e-02

#> tau_sqr[3,1] 0.010631414 3.567506e-02

#> tau_sqr[4,1] -0.010097914 1.115700e-02

#> tau_sqr[5,1] 0.025873522 4.755076e-02

#> tau_sqr[6,1] -0.071712154 -4.308767e-02

#> tau_sqr[2,2] 4.135404398 7.641136e+00

#> tau_sqr[3,2] -0.001947614 4.064107e-02

#> tau_sqr[4,2] 0.011882391 5.269828e-02

#> tau_sqr[5,2] 0.099505921 1.511025e-01

#> tau_sqr[6,2] 0.136967417 2.008179e-01

#> tau_sqr[3,3] 0.018258977 2.228298e-02

#> tau_sqr[4,3] 0.005592482 8.395281e-03

#> tau_sqr[5,3] -0.002481984 1.471749e-04

#> tau_sqr[6,3] -0.002522937 5.553628e-05

#> tau_sqr[4,4] 0.015822486 1.927988e-02

#> tau_sqr[5,4] -0.002457828 6.542526e-05

#> tau_sqr[6,4] -0.002784668 -4.211454e-04

#> tau_sqr[5,5] 0.016066790 1.970900e-02

#> tau_sqr[6,5] 0.006111479 8.904222e-03

#> tau_sqr[6,6] 0.016452707 2.036614e-02

#> i_sqr[1,1] 0.998419785 9.990156e-01

#> i_sqr[2,1] 0.999714050 9.998465e-01

#> i_sqr[3,1] 0.941225199 9.513165e-01

#> i_sqr[4,1] 0.951078915 9.594447e-01

#> i_sqr[5,1] 0.943868351 9.534298e-01

#> i_sqr[6,1] 0.961735979 9.682396e-01

confint(random, level = 0.99, lb = FALSE, robust = TRUE)

#> 0.5 % 99.5 %

#> alpha[1,1] 0.359783814 5.503876e-01

#> alpha[2,1] 2.365252063 2.762198e+00

#> alpha[3,1] 0.598013701 6.227969e-01

#> alpha[4,1] 0.244392499 2.676509e-01

#> alpha[5,1] -0.305700852 -2.808398e-01

#> alpha[6,1] 0.693101365 7.179915e-01

#> tau_sqr[1,1] 0.944034964 1.775461e+00

#> tau_sqr[2,1] -1.157245642 1.230705e-01

#> tau_sqr[3,1] 0.006696771 3.960971e-02

#> tau_sqr[4,1] -0.013437303 1.449639e-02

#> tau_sqr[5,1] 0.022467782 5.095650e-02

#> tau_sqr[6,1] -0.076209386 -3.859044e-02

#> tau_sqr[2,2] 3.584614119 8.191926e+00

#> tau_sqr[3,2] -0.008638781 4.733224e-02

#> tau_sqr[4,2] 0.005469750 5.911092e-02

#> tau_sqr[5,2] 0.091399508 1.592089e-01

#> tau_sqr[6,2] 0.126935786 2.108495e-01

#> tau_sqr[3,3] 0.017626760 2.291520e-02

#> tau_sqr[4,3] 0.005152131 8.835632e-03

#> tau_sqr[5,3] -0.002895055 5.602457e-04

#> tau_sqr[6,3] -0.002928045 4.606438e-04

#> tau_sqr[4,4] 0.015279291 1.982307e-02

#> tau_sqr[5,4] -0.002854259 4.618570e-04

#> tau_sqr[6,4] -0.003156004 -4.980906e-05

#> tau_sqr[5,5] 0.015494557 2.028123e-02

#> tau_sqr[6,5] 0.005672707 9.342993e-03

#> tau_sqr[6,6] 0.015837861 2.098099e-02

#> i_sqr[1,1] 0.998326174 9.991092e-01

#> i_sqr[2,1] 0.999693236 9.998673e-01

#> i_sqr[3,1] 0.939639742 9.529019e-01

#> i_sqr[4,1] 0.949764555 9.607591e-01

#> i_sqr[5,1] 0.942366133 9.549321e-01

#> i_sqr[6,1] 0.960714192 9.692614e-01

Profile-Likelihood Confidence Intervals

confint(random, level = 0.95, lb = TRUE)

#> Error in `[.data.frame`:

#> ! undefined columns selected

confint(random, level = 0.99, lb = TRUE)

#> Error in `[.data.frame`:

#> ! undefined columns selected

- The fixed part of the random-effects model gives pooled means $\boldsymbol{\alpha}$.
- The random part yields between-person covariances ($\boldsymbol{\tau}^{2}$) quantifying heterogeneity in set-point ($\boldsymbol{\mu}_{\boldsymbol{\eta}}$) and dynamics ($\boldsymbol{\beta}$) across individuals.
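The heterogeneity covariances are easier to read as standard deviations and correlations. The sketch below rescales the block for the two set-point elements using the rounded tau_sqr[1,1], tau_sqr[2,1], and tau_sqr[2,2] values printed by summary(random). It assumes that the first two elements of the pooled vector are the set-points, which is consistent with the order of the person-level output but is not stated explicitly in the source.

```r
# Between-person covariance block for the two set-point elements
# (rounded values from the printed summary(random) table).
tau_sqr_mu <- matrix(
  data = c(1.3597, -0.5171, -0.5171, 5.8883),
  nrow = 2
)

# Between-person standard deviations.
tau_sd <- sqrt(diag(tau_sqr_mu))
tau_sd

# Between-person correlation between the two set-points.
tau_cor <- cov2cor(tau_sqr_mu)
tau_cor
```

The off-diagonal entry of tau_cor is the between-person correlation of the two set-points, which is modest and negative here.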

means <- extract(random, what = "alpha")

means

#> [1] 0.4550857 2.5637252 0.6104053 0.2560217 -0.2932703 0.7055464

covariances <- extract(random, what = "tau_sqr")

covariances

#> [,1] [,2] [,3] [,4] [,5]

#> [1,] 1.3597480348 -0.51708757 0.023153239 0.0005295449 0.036712139

#> [2,] -0.5170875722 5.88826996 0.019346727 0.0322903374 0.125304219

#> [3,] 0.0231532390 0.01934673 0.020270978 0.0069938814 -0.001167405

#> [4,] 0.0005295449 0.03229034 0.006993881 0.0175511823 -0.001196201

#> [5,] 0.0367121386 0.12530422 -0.001167405 -0.0011962012 0.017887896

#> [6,] -0.0573999114 0.16889264 -0.001233700 -0.0016029067 0.007507850

#> [,6]

#> [1,] -0.057399911

#> [2,] 0.168892639

#> [3,] -0.001233700

#> [4,] -0.001602907

#> [5,] 0.007507850

#> [6,] 0.018409425

Finally, we compare the meta-analytic population estimates to the known generating values.

pop_mean

#> [1] 0.4408869 2.6095167 0.5990229 0.2511529 -0.3048517 0.6895688
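One simple way to quantify the comparison of means is the element-wise difference between the pooled estimates and the generating values. The sketch below uses the printed means and pop_mean vectors; est_means, true_means, and bias are illustrative names chosen to avoid overwriting the objects in the vignette.

```r
# Pooled means from the random-effects model (printed `means`).
est_means <- c(
  0.4550857, 2.5637252, 0.6104053, 0.2560217, -0.2932703, 0.7055464
)

# Generating population means (printed `pop_mean`).
true_means <- c(
  0.4408869, 2.6095167, 0.5990229, 0.2511529, -0.3048517, 0.6895688
)

# Element-wise difference and its largest absolute value.
bias <- est_means - true_means
round(bias, 4)
max(abs(bias))
```

The largest discrepancy is in the second element, which is also the element with the largest between-person variance.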

pop_cov

#> [,1] [,2] [,3] [,4] [,5]

#> [1,] 1.406089868 -0.53172453 2.334149e-02 1.792627e-03 3.819205e-02

#> [2,] -0.531724531 7.32735340 3.345138e-02 3.769325e-02 1.504922e-01

#> [3,] 0.023341485 0.03345138 2.380050e-02 9.249947e-03 -4.466312e-05

#> [4,] 0.001792627 0.03769325 9.249947e-03 1.909528e-02 2.736111e-04

#> [5,] 0.038192047 0.15049219 -4.466312e-05 2.736111e-04 1.885667e-02

#> [6,] -0.061114643 0.19748361 -5.601484e-04 1.248519e-05 8.802218e-03

#> [,6]

#> [1,] -6.111464e-02

#> [2,] 1.974836e-01

#> [3,] -5.601484e-04

#> [4,] 1.248519e-05

#> [5,] 8.802218e-03

#> [6,] 2.238834e-02

Summary

This vignette demonstrates a two-stage hierarchical estimation approach for dynamic systems:

1. individual-level DT-VAR estimation with stabilized measurement error, and
2. population-level meta-analysis of person-specific dynamics and means.